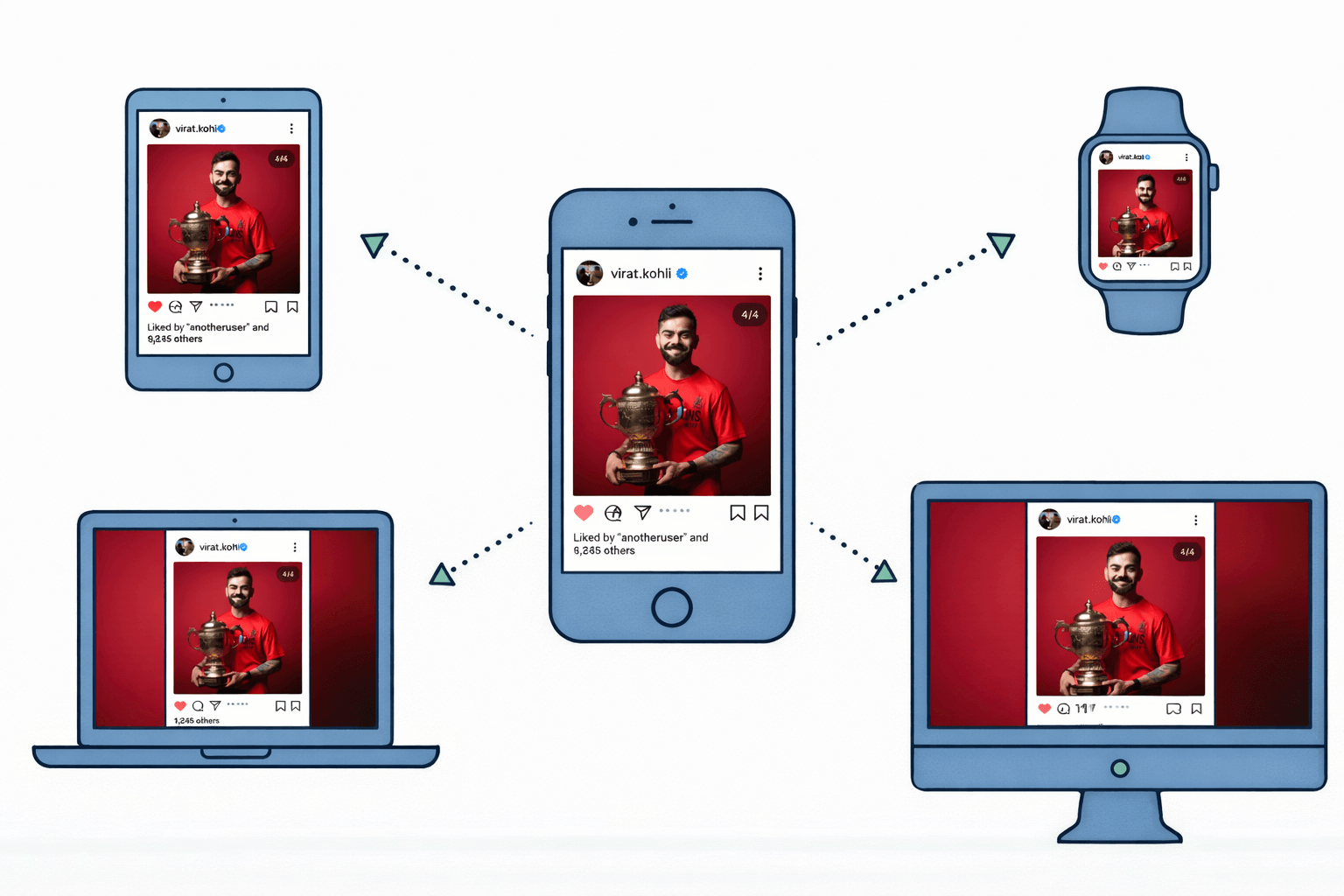

June 2025. The cricket world exploded.

Royal Challengers Bengaluru had just won their first-ever IPL trophy after 18 years of heartbreak. Virat Kohli, the face of RCB, posted a photo of himself holding the gleaming trophy, his dream finally realized.

What happened next was extraordinary.

Within 4 minutes, the post hit 1 million likes. By the 7-minute mark, Instagram was on fire. Fans from Mumbai to Manipur, from college students to corporate employees, everyone was double-tapping simultaneously. The historic victory against Punjab Kings wasn't just a sporting triumph—it became a digital phenomenon.

Picture this: hundreds of thousands of fans across India, all double-tapping their screens at once. Each tap registers as a like. The number climbs from 10,000 to 100,000 to a million in mere minutes. But here's the mind-blowing part: Instagram doesn't crash. It doesn't freeze. It doesn't even slow down.

How does Instagram handle this tsunami of activity? How do millions of likes get counted, stored, and displayed in real-time without the entire system collapsing? Let's pull back the curtain and see the fascinating machinery working behind that simple heart icon.

The Simple Truth About Instagram's Like System

When millions of people like a post on Instagram, the app doesn't process each like one by one on a single computer. Instead, it spreads the work across thousands of servers working together, temporarily stores likes in super-fast memory (called a cache), and updates the permanent database in the background.

Think of it like a concert venue handling thousands of ticket buyers. Instead of one counter, they open dozens of counters, use quick digital scanners, and update the central system later. Instagram does something similar, but at a scale that's hard to imagine.

How Instagram Handles Millions of Likes: The Complete Journey

Step 1: The Moment You Tap "Like"

When you double-tap that photo of Virat Kohli lifting the trophy, something happens in milliseconds.

Your Instagram app sends a tiny request over the internet. This request is basically a message saying: "Hey Instagram, user @yourname just liked post #12345." It's a simple signal, but multiply it by a million users doing it simultaneously, and you've got a massive flood of requests.
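
To make that "tiny request" concrete, here is a minimal sketch of what such a message could look like. The field names (`user`, `post_id`, `action`, `sent_at`) and the JSON encoding are assumptions for illustration; Instagram's actual internal request format is not public.

```python
import json
import time
from dataclasses import asdict, dataclass, field


@dataclass(frozen=True)
class LikeRequest:
    # Hypothetical fields: Instagram's real request format is not public.
    user: str
    post_id: int
    action: str = "like"
    sent_at: float = field(default_factory=time.time)  # client timestamp in seconds


def encode_like(request: LikeRequest) -> str:
    """Serialize the request into the small JSON payload the app would send."""
    return json.dumps(asdict(request))


payload = encode_like(LikeRequest(user="yourname", post_id=12345))
print(payload)  # prints the JSON payload; sent_at differs on every call
```

The whole message is a few dozen bytes. The challenge isn't the size of one request; it's the sheer number arriving at the same moment.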

Here's what most people don't realize: your like doesn't go directly to Instagram's main computer. That would be like sending all concert-goers through a single entrance—total chaos.

Real-world analogy: It's like raising your hand in a stadium. Your signal goes to the nearest camera operator, not directly to the main control room.

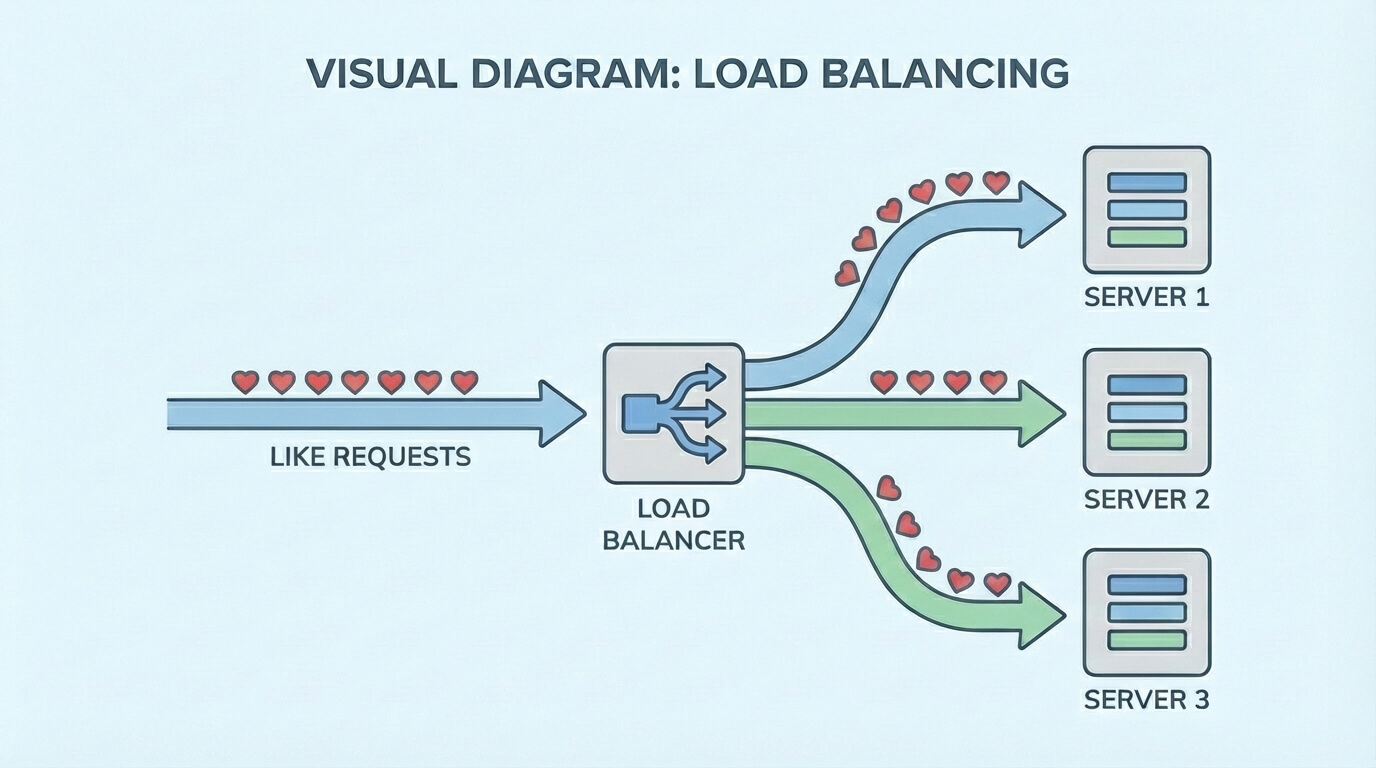

Step 2: The Traffic Controller (Load Balancing in Action)

Before your like request reaches Instagram's servers, it passes through something called a load balancer—think of it as a super-smart traffic cop.

Imagine a shopping mall during a festival sale. If everyone rushed to one billing counter, there'd be chaos. Smart malls open 20-30 counters and direct customers to the least crowded one. Instagram's load balancer does exactly this with millions of like requests.

When Virat's post goes viral:

Request 1 goes to Server A in California

Request 2 goes to Server B in Singapore

Request 3 goes to Server C in Ireland

And so on...

This distributed system ensures no single server gets overwhelmed. It's the secret sauce that prevents Instagram from crashing when millions of people like a post simultaneously.
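
The request list above follows the simplest balancing strategy, round robin: each new request goes to the next server in a fixed rotation. Here is a minimal sketch with hypothetical server names; real load balancers such as HAProxy or Nginx implement this internally and add health checks on top.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Send each request to the next server in a fixed rotation."""

    def __init__(self, servers: list[str]) -> None:
        if not servers:
            raise ValueError("need at least one server")
        self._rotation = cycle(servers)  # endless iterator: a, b, c, a, b, c, ...

    def pick(self) -> str:
        return next(self._rotation)


balancer = RoundRobinBalancer(["server-a", "server-b", "server-c"])
for request_id in range(1, 5):
    print(f"request {request_id} -> {balancer.pick()}")
# request 1 -> server-a
# request 2 -> server-b
# request 3 -> server-c
# request 4 -> server-a
```

Round robin assumes every server is equally capable and every request costs about the same. The mall analogy's "least crowded counter" is closer to the Least Connections strategy, which tracks how busy each server currently is.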

Step 3: The Speed Demon (Caching System)

Here's where Instagram gets really clever.

When your like arrives at a server, Instagram doesn't immediately write it to the main database. Why? Because databases are like filing cabinets—reliable but slow. When millions of likes flood in, constantly opening and closing that cabinet creates a bottleneck.

Instead, Instagram uses something called cache (specifically, systems like Redis or Memcached). Think of cache as a temporary notepad that's lightning-fast.

Real-life example:

When you're taking orders at a busy restaurant, you don't run to the kitchen after every single order. You jot down 5-10 orders on your notepad, then take them all at once. Much faster, right?

Instagram does this with likes:

Like #1 → Stored in cache ⚡

Like #2 → Stored in cache ⚡

Like #3 → Stored in cache ⚡

After a few seconds → All likes moved to permanent database

The like count you see? It's being pulled from this super-fast cache, not from the slow database. That's why it updates almost instantly.
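
The notepad pattern above is usually called write-behind (or write-back) caching: writes land in fast memory first and are flushed to durable storage later. Here is a minimal, single-process sketch in which plain Python data structures stand in for Redis and for the database; the class and method names are hypothetical.

```python
from collections import defaultdict


class WriteBehindLikeCache:
    """Hypothetical in-memory like counter that flushes buffered likes to a slower store."""

    def __init__(self) -> None:
        self.counts: dict[int, int] = defaultdict(int)  # post_id -> like count (what viewers see)
        self.pending: list[tuple[str, int]] = []        # likes not yet written to the database

    def like(self, user: str, post_id: int) -> int:
        # Fast path: update memory only and return the new count immediately.
        self.counts[post_id] += 1
        self.pending.append((user, post_id))
        return self.counts[post_id]

    def flush(self, database: list[tuple[str, int]]) -> int:
        # Slow path, run every few seconds: move all buffered likes in one go.
        batch, self.pending = self.pending, []
        database.extend(batch)
        return len(batch)


cache = WriteBehindLikeCache()
database: list[tuple[str, int]] = []  # stand-in for the permanent database
for user in ("priya", "arjun", "meera"):
    cache.like(user, post_id=12345)

print(cache.counts[12345])    # 3  (served from memory)
print(len(database))          # 0  (nothing persisted yet)
print(cache.flush(database))  # 3  (one batched write)
print(len(database))          # 3
```

This sketch deliberately skips two things a production system must handle: deduplicating repeat likes from the same user, and not losing buffered likes if the process crashes before a flush.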

Step 4: The Permanent Record (Database Update)

While the cache handles the real-time action, Instagram quietly moves likes to the permanent database in the background.

This happens in batches. Instead of saving each like individually (which would be slow), Instagram groups hundreds or thousands of likes and saves them together. It's like batch-processing in a factory.
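
Batching pays off because every write round trip and every transaction commit carries a fixed overhead. Here is a minimal sketch using Python's built-in `sqlite3` module as a stand-in for the production database: one `executemany` call inside one transaction writes the whole batch. The `likes` table and its schema are assumptions for illustration.

```python
import sqlite3


def save_batch(conn: sqlite3.Connection, batch: list[tuple[str, int]]) -> None:
    """Write a batch of (username, post_id) likes in a single transaction."""
    with conn:  # commits once at the end, or rolls back the whole batch on error
        conn.executemany(
            # PRIMARY KEY (username, post_id) + OR IGNORE: a repeated like is stored once
            "INSERT OR IGNORE INTO likes (username, post_id) VALUES (?, ?)",
            batch,
        )


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE likes (username TEXT, post_id INTEGER, PRIMARY KEY (username, post_id))"
)

batch = [(f"fan{i}", 12345) for i in range(1000)]
save_batch(conn, batch)
print(conn.execute("SELECT COUNT(*) FROM likes WHERE post_id = ?", (12345,)).fetchone()[0])  # 1000
```

A thousand likes cost one commit instead of a thousand.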

The main database uses powerful systems (like Cassandra or PostgreSQL with sharding) that can handle massive amounts of data across multiple machines.
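
Sharding means splitting one logical table across several machines and routing each row by a key. Here is a minimal sketch of hash-based routing, assuming likes are sharded by `post_id` across a fixed number of shards (both the key choice and the shard count are illustrative). A stable hash such as CRC-32 guarantees that every server computes the same shard for the same post.

```python
import zlib

NUM_SHARDS = 8  # illustrative; real deployments typically use many more logical shards


def shard_for(post_id: int, num_shards: int = NUM_SHARDS) -> int:
    """Map a post to a shard with a stable hash so every server agrees on the answer."""
    key = str(post_id).encode("utf-8")
    return zlib.crc32(key) % num_shards


for post_id in (12345, 12346, 99999):
    print(post_id, "-> shard", shard_for(post_id))
```

Two caveats apply to this simple scheme. A plain modulo remaps most posts when the shard count changes, so systems that expect to grow often map many logical shards onto fewer physical machines or use consistent hashing. And sharding by `post_id` sends every like on one viral post to the same shard, which is one more reason the cache and batching layers in front of the database matter.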

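Key point: The "1.2M likes" you see on Virat's post comes from the cache, so it can briefly run ahead of the permanent database. Within seconds, the background batches catch up, and those likes end up safely stored in the permanent database, replicated across multiple data centers around the world.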

Step 5: The Magic Number (Real-Time Count Update)

You refresh the page. The count jumps from 1.1M to 1.3M. How does Instagram update this number so smoothly for millions of viewers?

Instagram uses a technique called counter aggregation. Instead of counting every single like from scratch each time (imagine counting a million marbles one by one!), it maintains running totals.

When you view the post:

Your app requests the like count

Instagram checks the cache (remember, super fast!)

It pulls the current aggregated count

Sends it back to your screen in milliseconds
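
The read path above is commonly called cache-aside: check the cache, and only on a miss fall back to the database and repopulate the cache. Here is a minimal sketch with dictionaries standing in for Redis and the database; `LikeCountReader` and `load_count_from_db` are hypothetical names. The stored value is a running total, so a read never has to recount individual likes.

```python
class LikeCountReader:
    """Hypothetical reader: serve like counts from a cache, falling back to the database."""

    def __init__(self, db_counts: dict[int, int]) -> None:
        self.cache: dict[int, int] = {}
        self.db_counts = db_counts  # stand-in for a running-total column in the database
        self.db_reads = 0

    def load_count_from_db(self, post_id: int) -> int:
        # Slow path: one lookup of a stored total, not a recount of a million rows.
        self.db_reads += 1
        return self.db_counts.get(post_id, 0)

    def get_count(self, post_id: int) -> int:
        if post_id in self.cache:  # cache hit: no database work at all
            return self.cache[post_id]
        count = self.load_count_from_db(post_id)
        self.cache[post_id] = count  # populate the cache for the next viewer
        return count


reader = LikeCountReader({12345: 1_234_568})
for _ in range(1000):  # a thousand viewers open the post
    reader.get_count(12345)
print(reader.get_count(12345), reader.db_reads)  # 1234568 1
```

A real deployment also has to keep the cached total fresh, for example by incrementing it on every like (as Step 3 describes) or expiring it after a short time. Either way, viewers may briefly see a slightly stale number.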

Sometimes you might notice a slight delay between when you like a post and when the count updates. That's normal—Instagram prioritizes eventual consistency over perfect real-time accuracy. Your like is registered, but it might take 1-2 seconds to reflect in the public count.

Step 6: The Expansion Power (Scalability at Work)

What happens when a post goes unexpectedly viral, like Virat's celebration post?

Instagram's system automatically scales. This is called horizontal scaling—instead of making one server more powerful (vertical scaling), Instagram adds more servers to share the load.

Simple analogy:

During rush hour, Uber doesn't make one car bigger; it brings more cars onto the road. Instagram brings more servers online when traffic spikes.

The system monitors traffic patterns:

Normal day: 100 servers handling likes

Viral post detected: System spins up 500 more servers

Crisis averted ✅

This is all automated. No human engineer is sitting there manually adding servers. Monitoring systems detect the surge and respond automatically, typically within seconds to minutes, depending on how quickly new servers can start.
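
A common autoscaling rule is target tracking: measure the load, divide by what one server should handle, and move the fleet toward that number within fixed bounds. Here is a minimal sketch; the per-server capacity and the minimum/maximum fleet sizes are illustrative assumptions chosen to match the example numbers above, not Instagram's real figures.

```python
import math


def desired_servers(
    requests_per_second: float,
    capacity_per_server: float = 5_000.0,  # illustrative: requests one server handles comfortably
    min_servers: int = 100,
    max_servers: int = 1_000,
) -> int:
    """Target-tracking rule: enough servers for the current load, clamped to safe bounds."""
    needed = math.ceil(requests_per_second / capacity_per_server)
    return max(min_servers, min(max_servers, needed))


print(desired_servers(200_000))    # 100  -> normal day: load fits within the minimum fleet
print(desired_servers(3_000_000))  # 600  -> viral post: scale out by 500 servers
print(desired_servers(9_000_000))  # 1000 -> capped at the configured maximum
```

Production autoscalers also add cooldown periods so the fleet doesn't flap up and down on every brief spike.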

Following the Complete Journey: A Real-Life Example

Let's trace what happens when Priya from Mumbai likes Virat Kohli's viral post:

10:05:23 AM - Priya double-taps the post on her phone

10:05:23.1 AM - Her Instagram app sends a like request over the internet

10:05:23.2 AM - The request hits Instagram's load balancer in Singapore

10:05:23.3 AM - Load balancer routes it to Server #4521 (least busy)

10:05:23.4 AM - Server stores the like in Redis cache (lightning fast!)

10:05:23.5 AM - Counter in cache increases from 1,234,567 to 1,234,568

10:05:23.6 AM - Priya's screen shows the filled heart icon ❤️

10:05:24 AM - Other users viewing the post see the updated count

10:05:30 AM - Background process saves Priya's like to permanent database

Total time from tap to display: Less than 1 second

Meanwhile, thousands of other people are liking the same post. Each like follows the same journey through different servers, but they all end up in the same aggregated count that the world sees.
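
One way many servers can feed "the same aggregated count" is for each server to keep its own partial tally and for a background job to merge those tallies into the global total. Here is a minimal sketch; the server names, the numbers, and the merge-by-summing design are hypothetical illustrations, not a description of Instagram's internal implementation.

```python
from collections import Counter

# Likes each server has seen per post since the last merge (hypothetical numbers).
partial_counts = {
    "server-4521": Counter({12345: 812}),
    "server-0977": Counter({12345: 640, 67890: 3}),
    "server-3108": Counter({12345: 1_015}),
}


def merge_partials(global_counts: Counter, partials: dict[str, Counter]) -> Counter:
    """Fold every server's partial tally into the global count, then reset the partials."""
    for tally in partials.values():
        global_counts.update(tally)  # Counter.update adds counts instead of replacing them
        tally.clear()
    return global_counts


totals = Counter({12345: 1_234_568})
merge_partials(totals, partial_counts)
print(totals[12345])  # 1237035
```

Because each server only touches its own tally, servers never wait on one another to record a like; the cost is that the global number lags by up to one merge interval.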

Why This System Works So Well

Instagram's real-time like processing isn't magic; it's smart engineering. Here's what makes it so resilient:

Distributed servers across the globe - No single point of failure. If one server crashes, others keep working.

Caching layer for speed - Temporary fast storage handles the initial rush, preventing database overload.

Load balancing intelligence - Requests automatically routed to the least busy servers, like a smart GPS avoiding traffic.

Batch processing in background - Likes saved to permanent database in groups, not one at a time.

Horizontal auto-scaling - System adds more servers automatically when traffic spikes.

Eventual consistency model - Your like is registered immediately, but the exact count might take a moment to synchronize globally.

What Developers Can Learn From Instagram's System

If you're building any application that needs to handle millions of requests, Instagram's like system is a masterclass in scalable system design. Here are the actionable takeaways:

1. Never Hit the Database Directly for High-Frequency Actions

Store fast-changing data (such as like counts) in a cache first. Use Redis, Memcached, or similar in-memory stores. Your database should receive batched updates, not individual writes.

Practical tip: If you're building a voting app, a live sports scoreboard, or a comment system, implement caching from day one.

2. Design for Distribution From the Start

Don't build your app on a single powerful server. Use multiple smaller servers that work together. This is the foundation of handling millions of requests.

Practical tip: Use containerization (Docker) and orchestration tools (Kubernetes) to easily scale your application horizontally.

3. Implement Smart Load Balancing

Use load balancers (Nginx, HAProxy, AWS ALB) to distribute incoming requests evenly. This prevents any single server from becoming a bottleneck.

Practical tip: Cloud platforms like AWS, Google Cloud, and Azure offer built-in load balancing. Use them.

4. Accept Eventual Consistency

For social features like likes, comments, or views, users don't need to see the exact count every millisecond. A slight delay is acceptable if it means your system stays fast and reliable.

Practical tip: Inform users when counts are approximate ("2.1M likes") rather than claiming perfect precision.
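
Producing those rounded labels is a small formatting step. Here is a minimal sketch of a hypothetical `format_count` helper; the K/M/B suffixes and the choice to truncate rather than round (so a label never overstates the real count) are assumptions, not Instagram's documented behavior.

```python
def format_count(n: int) -> str:
    """Hypothetical helper: compact, approximate label such as '2.1M'."""
    if n < 0:
        raise ValueError("count cannot be negative")
    for threshold, suffix in ((1_000_000_000, "B"), (1_000_000, "M"), (1_000, "K")):
        if n >= threshold:
            tenths = n * 10 // threshold  # truncate, never round up
            whole, frac = divmod(tenths, 10)
            return f"{whole}{suffix}" if frac == 0 else f"{whole}.{frac}{suffix}"
    return str(n)


for n in (512, 1_234, 999_999, 2_134_567, 2_000_000):
    print(n, "->", format_count(n))
# 512 -> 512
# 1234 -> 1.2K
# 999999 -> 999.9K
# 2134567 -> 2.1M
# 2000000 -> 2M
```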

5. Monitor and Auto-Scale

Set up monitoring to detect traffic spikes and automatically add resources. Don't wait for your system to crash before scaling.

Practical tip: Use services like AWS Auto Scaling, Google Cloud's managed instance group autoscaler, or Azure Virtual Machine Scale Sets.

Developer Insight: The Technology Behind the Scenes

For those curious about the technical stack that makes Instagram's like system possible, here's a simplified breakdown:

Load Balancers

These are the traffic cops of the internet. Popular choices include:

HAProxy - Fast and reliable

Nginx - Versatile and widely used

AWS Elastic Load Balancing - Cloud-native solution

They use algorithms like Round Robin (distribute requests equally) or Least Connections (send to the server with fewest active requests).
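
Least Connections needs one extra piece of state: how many requests each server is handling right now. Here is a minimal sketch with hypothetical server names; production balancers track this per backend for you (HAProxy, for example, exposes it as `balance leastconn`).

```python
class LeastConnectionsBalancer:
    """Route each request to the server with the fewest in-flight requests."""

    def __init__(self, servers: list[str]) -> None:
        if not servers:
            raise ValueError("need at least one server")
        self.active = {server: 0 for server in servers}  # server -> in-flight requests

    def acquire(self) -> str:
        # min() returns the first server with the lowest count, so ties go in list order.
        server = min(self.active, key=self.active.get)
        self.active[server] += 1
        return server

    def release(self, server: str) -> None:
        # Call when the server finishes a request.
        self.active[server] -= 1


lb = LeastConnectionsBalancer(["server-a", "server-b", "server-c"])
first, second = lb.acquire(), lb.acquire()  # server-a, then server-b
lb.release(first)                           # server-a finishes its request
print(lb.acquire())                         # server-a (0 active, tied with server-c; list order wins)
```

Unlike round robin, this adapts automatically when some requests take longer than others.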

Cache Systems (The Speed Demons)

Platforms like Instagram typically use technologies such as Redis and Memcached for caching. These are in-memory data stores that, spread across a cluster of machines, can handle millions of operations per second.

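Why are they so fast? Because data is stored in RAM (temporary memory) rather than on disk. Reading from RAM is typically orders of magnitude faster than reading from disk: roughly a hundred nanoseconds, versus tens to hundreds of microseconds for an SSD and milliseconds for a spinning hard drive.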

The tradeoff: Data that lives only in memory can be lost if the server restarts (Redis offers optional persistence to disk; Memcached does not), which is why permanent database storage is still necessary.

Distributed Databases

For permanent storage, Instagram uses distributed databases that spread data across multiple machines. Technologies like:

Cassandra - Great for handling massive write loads

PostgreSQL with sharding - Traditional database split across servers

RocksDB - A key-value storage engine built at Facebook/Meta, often embedded inside larger distributed databases

These databases ensure your like is saved permanently across multiple data centers, so even if one entire data center goes offline, your data is safe.

Message Queues

When likes need to move from cache to database, Instagram uses message queues (like Apache Kafka or RabbitMQ) to handle the flow smoothly without overwhelming the database.

Think of it as a waiting line at a restaurant—orders (likes) queue up and get processed at a manageable pace.
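
The waiting-line idea can be sketched with Python's standard `queue.Queue` standing in for Kafka or RabbitMQ: web servers put likes on a bounded queue, and a single consumer drains it in batches at a pace the database can absorb. The batch size, the queue limit, and the `write_batch` callback are illustrative assumptions; real brokers add durability and replay that this in-process queue lacks.

```python
import queue
import threading
from collections.abc import Callable

BATCH_SIZE = 100
STOP = None  # sentinel telling the consumer to finish


def consume(likes: queue.Queue, write_batch: Callable[[list], None]) -> None:
    """Drain likes from the queue and hand them to the database in batches."""
    batch = []
    while True:
        item = likes.get()
        if item is STOP:
            break
        batch.append(item)
        if len(batch) >= BATCH_SIZE:
            write_batch(batch)
            batch = []
    if batch:  # flush whatever is left when shutting down
        write_batch(batch)


written_batches: list[list[tuple[str, int]]] = []  # stand-in for the database
likes_queue: queue.Queue = queue.Queue(maxsize=1_000)  # bounded: producers block if the consumer falls behind
worker = threading.Thread(target=consume, args=(likes_queue, written_batches.append))
worker.start()

for i in range(250):  # producers: web servers enqueue likes
    likes_queue.put((f"fan{i}", 12345))
likes_queue.put(STOP)
worker.join()

print([len(b) for b in written_batches])  # [100, 100, 50]
```

The bounded queue is what keeps the flow "manageable": when the database falls behind, the queue fills up and producers slow down instead of overwhelming it.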

The Bottom Line: Instagram's Billion-Dollar Architecture

When you tap that heart icon on Virat Kohli's post, you're interacting with one of the most sophisticated distributed systems on the planet.

Instagram doesn't rely on one powerful server in Silicon Valley. Instead, it uses a network of thousands of servers spread across continents, working together like a perfectly synchronized orchestra.

The core principles:

Distribute the load (don't put all eggs in one basket)

Cache aggressively (speed over perfection)

Process in batches (efficiency over real-time individual handling)

Scale automatically (prepare for the unexpected)

This is how systems like Instagram are designed to handle millions of likes without breaking a sweat. The same principles underpin Netflix's streaming, Amazon's shopping cart, and Google's search results.

Final thought: The next time you casually double-tap a post, remember—you've just triggered a chain reaction across multiple continents, involving load balancers, cache systems, distributed databases, and hundreds of servers working in perfect harmony. All in less time than it takes to blink.

That's the beautiful complexity hidden behind Instagram's simple heart icon. And now you know, in broad strokes, how it works.