The Evolution of Computers: From Charles Babbage's Dream to Modern AI

The Story Behind the Screen You're Reading This On

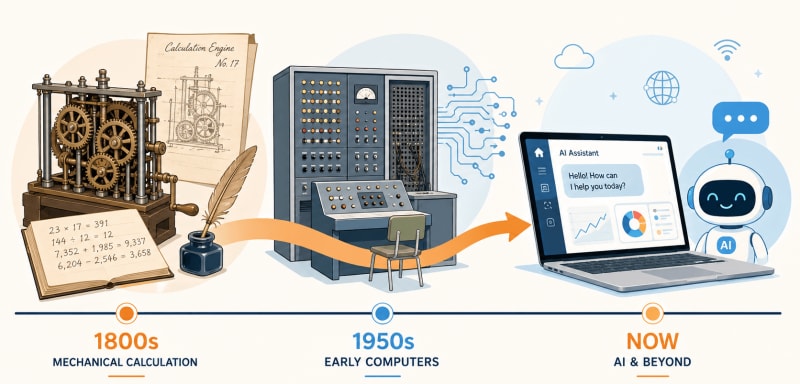

The device you're using right now, whether it's a phone, laptop, or tablet, has a story that began over 200 years ago in a cramped London workshop.

A brilliant mathematician named Charles Babbage was sketching designs for a machine that could think. Well, sort of. He wanted to build a mechanical device that performed calculations automatically using only gears, levers, and metal wheels.

People thought he was crazy. His machine was never fully built in his lifetime, but his idea sparked a revolution that would transform human civilisation.

From Babbage's sketches to room-sized electronic monsters to pocket computers to AI that can paint, write, and even drive cars, this is the evolution of computers. And honestly? It's one of the wildest transformation stories in history.

The Beginning: Charles Babbage's Impossible Dream

In the early 1800s, mathematical tables were calculated by hand. Human "computers" (yes, that was a job title) would sit for hours doing repetitive arithmetic. Mistakes were common. And dangerous: errors in navigation tables could sink ships.

Charles Babbage, a British mathematician, was fed up with these errors. In 1822, he proposed the Difference Engine—a mechanical calculator capable of automatically computing mathematical tables.
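The principle behind the Difference Engine was the method of finite differences. For a polynomial, the differences between successive values eventually become constant, so a whole table can be produced by repeated addition alone, with no multiplication. The Python sketch below is a hypothetical illustration of that principle, not a model of Babbage's actual gears; the function names and the example polynomial x² + x + 41 are chosen purely for illustration.

```python
def initial_differences(f, degree):
    """Return [f(0), Δf(0), Δ²f(0), ...] up to the given polynomial degree."""
    values = [f(x) for x in range(degree + 1)]
    diffs = []
    while values:
        diffs.append(values[0])
        # Each pass replaces the list with its successive differences.
        values = [b - a for a, b in zip(values, values[1:])]
    return diffs


def difference_engine(registers, steps):
    """Tabulate a polynomial from its initial differences using only addition."""
    registers = list(registers)  # copy so the caller's list is untouched
    table = []
    for _ in range(steps):
        table.append(registers[0])
        # Add each difference into the register above it, working top to
        # bottom, so every addition uses the value from the previous step.
        for k in range(len(registers) - 1):
            registers[k] += registers[k + 1]
    return table


def f(x):
    return x * x + x + 41


print(difference_engine(initial_differences(f, 2), 5))  # [41, 43, 47, 53, 61]
```

Once the starting differences are set, every further entry in the table costs only a handful of additions, which is exactly the kind of work gears and wheels can do reliably.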

But he didn't stop there.

In 1837, Babbage designed something far more ambitious: the Analytical Engine. This machine had everything a modern computer has conceptually:

Input (punch cards, borrowed from textile looms)

Processor (the "mill" where calculations happened)

Memory (the "store" where numbers were kept)

Output (printed results)

Here's the kicker: This was entirely mechanical. No electricity. Just brass gears, levers, and steam power.

It was never fully built—too expensive, too complex for the technology of the time. But Babbage's design laid the foundation for everything that followed.

Why he's called the "Father of the Computer":

He envisioned a programmable, general-purpose computing machine over a century before the first electronic computer was built.

Fun fact: Ada Lovelace, a mathematician who worked with Babbage, wrote what many consider the world's first computer program—instructions for the Analytical Engine to calculate Bernoulli numbers. She saw potential in the machine that even Babbage didn't fully grasp.
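Lovelace's notes laid out, step by step, how the Analytical Engine would work through the Bernoulli calculation. For a sense of what that calculation involves, here is a minimal modern sketch in Python. It uses a standard textbook recurrence (shown in the comments) rather than Lovelace's own procedure or numbering, and the function name is hypothetical.

```python
from fractions import Fraction
from math import comb


def bernoulli_numbers(n):
    """Return B_0 .. B_n as exact fractions (modern convention, B_1 = -1/2)."""
    b = [Fraction(1)]  # B_0 = 1
    for m in range(1, n + 1):
        # Standard recurrence: sum over k = 0..m of C(m + 1, k) * B_k = 0.
        # Every term except the last is already known, and its coefficient
        # C(m + 1, m) equals m + 1, so we can solve directly for B_m.
        known = sum(comb(m + 1, k) * b[k] for k in range(m))
        b.append(-known / (m + 1))
    return b


for i, value in enumerate(bernoulli_numbers(8)):
    print(f"B_{i} = {value}")
```

Running it prints values such as B_2 = 1/6 and B_8 = -1/30; every odd-indexed number after B_1 comes out as 0.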

The Electronic Revolution: ENIAC and the 1940s

Fast forward to World War II. The need for rapid calculations—ballistics tables, code-breaking, simulations—drove the development of electronic computers.

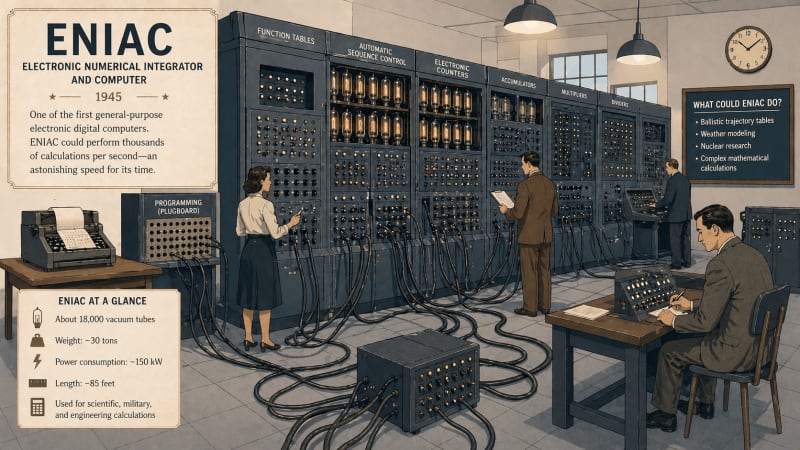

The ENIAC (Electronic Numerical Integrator and Computer) was completed at the University of Pennsylvania in late 1945 and unveiled to the public in February 1946.

What made ENIAC special?

First general-purpose electronic computer

Used vacuum tubes instead of mechanical parts

Could perform 5,000 additions per second (mind-blowing for the time)

The catch?

Size: Filled an entire room (1,800 square feet)

Weight: 30 tons

Power consumption: 150 kilowatts (enough to power 150 homes)

Heat: Generated so much heat that it needed industrial cooling

Programming ENIAC was brutal. No keyboard. No screen. Engineers had to physically rewire the machine using cables and switches for each new task. It could take days to set up a single program.

But it worked. And it proved that electronic computing was possible.

Other early machines:

Colossus (1943): British code-breaking computer

UNIVAC I (1951): One of the first commercial computers (the first built in the United States), famously predicted Eisenhower's 1952 election victory

The Five Generations of Computers (The Real Evolution)

Computer history is commonly divided into five generations, each defined by the core technology that powers it.

1st Generation (1940s–1950s): Vacuum Tubes

Technology: Vacuum tubes (glass tubes that controlled electrical signals)

Characteristics:

Massive size

Generated intense heat

Frequent breakdowns

Limited memory (measured in kilobytes)

Programmed using machine language (binary code)

Examples: ENIAC, UNIVAC, IBM 701

Think of it like: Using giant lightbulbs to do math. Hot, fragile, and inefficient—but revolutionary.

2nd Generation (1950s–1960s): Transistors

Technology: Transistors (tiny semiconductor devices that replaced vacuum tubes)

Characteristics:

Smaller size

More reliable

Generated less heat

Faster processing

Used assembly language (slightly easier than binary) and the first high-level languages, such as FORTRAN and COBOL

Examples: IBM 1401, CDC 1604

The shift: Transistors were invented at Bell Labs in 1947. By the late 1950s, they replaced vacuum tubes, making computers faster and more practical.

Think of it like: Replacing giant lightbulbs with tiny switches. Same function, way better execution.

3rd Generation (1960s–1970s): Integrated Circuits

Technology: Integrated Circuits (ICs)—multiple transistors packed onto a single silicon chip

Characteristics:

Even smaller

Cheaper to produce

More processing power

High-level programming languages (COBOL, FORTRAN)

Early operating systems

Examples: IBM System/360, DEC PDP-8

The breakthrough: Instead of soldering thousands of individual transistors, engineers could etch entire circuits onto a tiny chip. This paved the way for the microprocessor and, eventually, the personal computer.

Think of it like: Instead of building with individual LEGO bricks, you get pre-assembled modules. Faster. More efficient.

4th Generation (1970s–Present): Microprocessors

Technology: Microprocessors—an entire CPU (central processing unit) on a single chip

Characteristics:

Compact enough for personal use

Affordable

Graphical user interfaces (GUI)

Networking capabilities

Internet integration

Examples: Intel 4004 (1971, the first commercial microprocessor), Apple II, IBM PC, modern laptops, smartphones

The revolution: Computers went from room-sized machines to desktop devices. Then laptops. Then pocket-sized smartphones.

1981: IBM PC launched. Personal computers became mainstream.

1984: Apple Macintosh brought the mouse and graphical user interface to a mass audience (no more command-line typing).

1990s: Internet explosion. Computers became connected globally.

Think of it like: Computers became appliances. Like buying a toaster or TV—something anyone could own and use.

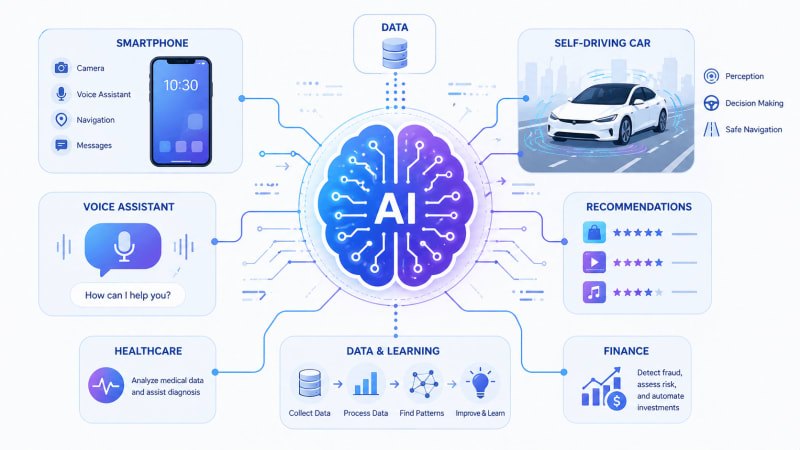

5th Generation (Present–Future): Artificial Intelligence

Technology: AI, machine learning, natural language processing, neural networks

Characteristics:

Self-learning systems

Voice recognition (Siri, Alexa)

Image recognition

Predictive analytics

Quantum computing (emerging)

Examples: ChatGPT, self-driving cars, recommendation algorithms (Netflix, YouTube), smart assistants

What's different: These computers don't just execute programmed instructions—they learn from data and improve over time.

Think of it like: Computers that don't just follow recipes—they taste the food, adjust seasonings, and create new dishes on their own.

The Personal Computer Revolution & The Internet Boom

Computers Come Home

In the 1970s and 80s, computers stopped being exclusive tools for scientists and businesses. They entered living rooms.

Key players:

Apple (1976):

Steve Jobs and Steve Wozniak built the Apple I in a garage. The Apple II (1977) became one of the first mass-market personal computers, and it stood out for its colour graphics.

Microsoft (1975):

Bill Gates and Paul Allen created software for personal computers. MS-DOS became the operating system for IBM PCs. Windows (1985) brought graphical interfaces to the masses.

IBM (1981):

The IBM PC legitimized personal computers for business use. It became the industry standard.

The Internet Changes Everything

The internet had existed in academic and military circles since the late 1960s (ARPANET). But in the 1990s, it exploded into public life.

1991: The World Wide Web, proposed by Tim Berners-Lee in 1989, went public

1998: Google launched

2004: Facebook launched

2007: iPhone launched (smartphones put computers in pockets)

Suddenly, computers weren't isolated machines. They were connected nodes in a global network. Information, communication, commerce—everything accelerated.

Modern Era: Artificial Intelligence and Machine Learning

AI isn't new. The term was coined in 1956. But for decades, progress was slow because computers weren't powerful enough.

That changed in the 2010s.

What is AI (in simple terms)? Artificial Intelligence is when computers perform tasks that normally require human intelligence, such as recognising faces, understanding speech, making decisions, and learning from experience.

Machine Learning is a subset of AI where computers learn patterns from data without being explicitly programmed for every scenario.
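To make "learning patterns from data" concrete, here is a deliberately tiny sketch in Python. The program is never told the rule y = 2x + 1; it starts from a guess of zero and repeatedly nudges two parameters to shrink its prediction error (gradient descent on mean squared error). The data, function name, and settings are hypothetical, and real systems such as recommendation engines use vastly larger models, but adjusting parameters step by step to reduce error is the same basic idea used to train neural networks.

```python
def train_linear_model(xs, ys, learning_rate=0.01, epochs=5000):
    """Learn w and b so that w * x + b approximates y, by gradient descent."""
    w, b = 0.0, 0.0  # start knowing nothing
    n = len(xs)
    for _ in range(epochs):
        # Prediction errors for the current parameters.
        errors = [w * x + b - y for x, y in zip(xs, ys)]
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * e * x for e, x in zip(errors, xs)) / n
        grad_b = sum(2 * e for e in errors) / n
        # Step each parameter a little in the direction that lowers the error.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


# Example data that happens to follow y = 2x + 1 (the program isn't told this).
xs = [0, 1, 2, 3, 4]
ys = [1, 3, 5, 7, 9]
w, b = train_linear_model(xs, ys)
print(f"learned w = {w:.3f}, b = {b:.3f}")
print(f"prediction for x = 10: {w * 10 + b:.2f}")
```

Run as written, it should settle on values very close to w = 2 and b = 1, and it can then make predictions for inputs it never saw, such as x = 10.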

Real-world examples you use daily:

Netflix recommendations: AI analyses what you watch and suggests similar content.

Google Search: AI understands your query and ranks results.

Spam filters: AI learns to identify junk emails.

Voice assistants: Siri, Alexa, Google Assistant use natural language processing.

Self-driving cars: AI processes camera feeds and makes driving decisions in real time.

ChatGPT (and similar): AI generates human-like text based on prompts.

Why now?

Three factors converged:

Massive data (enormous volumes generated online every day)

Powerful processors (GPUs, specialized chips)

Better algorithms (deep learning, neural networks)

AI isn't sci-fi anymore. It's embedded in everyday life.

How Computers Changed the World

Computers didn't just change technology—they rewired society.

Education

Online courses (Khan Academy, Coursera)

Research databases are accessible instantly.

Simulations for science experiments

Distance learning (especially vital during COVID-19)

Communication

Email largely replaced snail mail for everyday correspondence.

Video calls (Zoom, FaceTime) connected families across continents.

Social media created new forms of community (for better and worse)

Business

E-commerce (Amazon, online shopping)

Digital payments

Remote work

Data analytics driving decisions

Entertainment

Streaming (Netflix, Spotify)

Video games (from Pong to photorealistic VR)

Digital art, music production

Healthcare

Medical imaging (MRI, CT scans)

Electronic health records

AI-assisted diagnosis

Telemedicine

Bottom line: There's almost no aspect of modern life untouched by computers.

The Other Side: Challenges and Reality Check

Computers gave us incredible power. But power comes with problems.

Privacy Concerns

Every click, search, and like can be tracked. Companies collect data. Governments monitor activity. Your digital footprint can be very hard to erase.

Over-Dependence

When systems crash, life halts. GPS fails? People get lost. Internet outage? Work stops. We've become too reliant.

Job Displacement

Automation replaces human workers. Manufacturing, customer service, even creative fields—AI is encroaching. What happens to people whose skills become obsolete?

Misinformation

The internet spreads information fast—including false information. Deepfakes, fake news, and echo chambers. Truth becomes harder to find.

AI Risks

Bias: AI learns from biased data, perpetuating discrimination.

Control: As AI becomes more powerful, who controls it?

Weaponization: Autonomous weapons, surveillance states.

We're not anti-technology here. Just realistic. Computers are tools. Powerful tools. And like any tool, they can build or destroy depending on how we use them.

The Future: Where Are Computers Headed?

Quantum Computing

Current computers use bits, which are always either 0 or 1. Quantum computers use qubits, which can exist in a superposition: a combination of 0 and 1 that only settles on one value when it is measured.
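In the standard notation of quantum computing, the state of a single qubit is written as a weighted combination of 0 and 1:

$$
|\psi\rangle = \alpha\,|0\rangle + \beta\,|1\rangle, \qquad \alpha, \beta \in \mathbb{C}, \qquad |\alpha|^2 + |\beta|^2 = 1
$$

Here α and β are complex numbers called amplitudes. Measuring the qubit gives 0 with probability |α|² and 1 with probability |β|², so α = β = 1/√2 is an even 50/50 split. "Both at once" is shorthand for this: the qubit carries both amplitudes until it is measured, and a register of n qubits is described by 2ⁿ amplitudes.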

What does that mean?

For certain specific problems, quantum computers could in principle find answers in seconds that would take current supercomputers thousands of years. Drug discovery, climate modelling, cryptography: quantum computing could revolutionise them all.

Companies like IBM and Google, along with many startups, are racing to build practical quantum machines.

AI Everywhere

AI will become even more embedded:

Personal AI assistants managing your schedule, finances, and health

AI tutors customising education for each student

AI systems assisting doctors with diagnosis

Creative AI collaborating with artists, writers, and musicians.

Brain-Computer Interfaces

Companies like Neuralink are developing chips that connect directly to the brain. Control devices with thoughts. Restore mobility to paralysed individuals. Upload knowledge?

Sounds like sci-fi. But early prototypes already exist.

Edge Computing

Instead of sending data to distant servers (the cloud), processing happens locally (on your device or a nearby hub). Faster. More private.

One thing's certain: Computers will keep evolving. Faster. Smarter. Smaller. More integrated into our lives.

FAQs About the Evolution of Computers

Who invented the computer?

No single person invented the computer. Charles Babbage designed the first general-purpose mechanical computer, the Analytical Engine (1830s). The first general-purpose electronic computer, ENIAC, was built by John Presper Eckert and John Mauchly in 1945. Modern computers evolved through contributions from thousands of engineers and scientists.

What is ENIAC?

ENIAC (Electronic Numerical Integrator and Computer) was the first general-purpose electronic computer, completed in 1945. It used vacuum tubes and filled an entire room. It was built to calculate artillery firing tables for the U.S. Army during World War II, although it was not finished until after the war had ended.

What are the five generations of computers?

The five generations are: (1) Vacuum Tubes (1940s-50s), (2) Transistors (1950s-60s), (3) Integrated Circuits (1960s-70s), (4) Microprocessors (1970s-present), and (5) Artificial Intelligence (present-future). Each generation brought smaller size, more power, and new capabilities.

What is AI in simple words?

Artificial Intelligence (AI) is the ability of computers to perform tasks that normally require human intelligence, such as recognizing images, understanding speech, making decisions, or learning from experience. Examples include voice assistants (Siri, Alexa), recommendation systems (Netflix), and chatbots.

When did personal computers become popular?

Personal computers became popular in the late 1970s and early 1980s with machines like the Apple II (1977) and IBM PC (1981). The 1990s saw explosive growth as prices dropped and the internet made computers essential for communication and information.

From Gears to Intelligence

Charles Babbage sketched designs for a thinking machine in 1837. He died in 1871 without seeing it built.

Over a century later, engineers created ENIAC—a 30-ton electronic beast that could calculate faster than any human.

Decades after that, computers fit on desks. Then laps. Then pockets.

And now? They learn. They adapt. They create.

The evolution of computers isn't just a technology story. It's a story about human ambition—the drive to build tools that extend our minds, solve impossible problems, and connect us across the planet.

We went from mechanical gears to artificial intelligence in about two centuries.

Imagine what the next 200 will bring.